10 releases

| new 0.9.1 | Mar 30, 2025 |

|---|---|

| 0.9.0 | Aug 16, 2024 |

| 0.8.5 | Jun 19, 2024 |

| 0.7.6 |

|

| 0.5.8 | Jun 17, 2024 |

#181 in Machine learning

282 downloads per month

Used in json2bin

1MB

931 lines

RWKV Tokenizer

A fast RWKV Tokenizer written in Rust that supports the World Tokenizer used by the RWKV v5 and v6 models.

Installation

To use rwkv-tokenizer, add the following to your Cargo.toml file:

[dependencies]

rwkv-tokenizer = "0.9.1"

Usage

use rwkv_tokenizer::WorldTokenizer;

let text = "Today is a beautiful day. 今天是美好的一天。";

let tokenizer = WorldTokenizer::new(None).unwrap();

let tokens_ids = tokenizer.encode(text);

let decoding = tokenizer.decode(tokens_ids).unwrap();

println!("tokens: {:?}", tokens_ids);

println!("text: {:?}", text);

println!("decoding: {:?}", decoding);

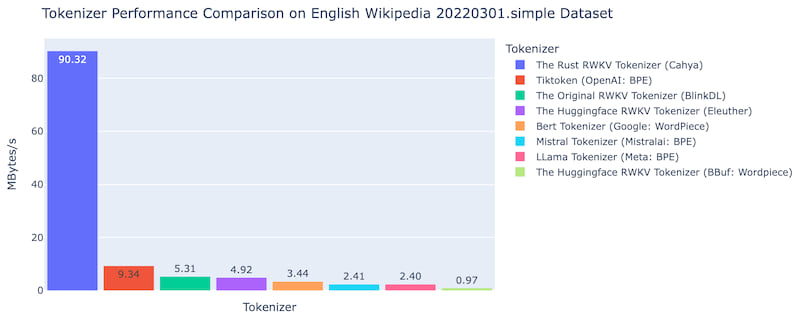

Performance and Validity Test

We compared the encoding results of the Rust RWKV Tokenizer and the original tokenizer using the English Wikipedia and Chinese poetries datasets. Both results are identical. The Rust RWKV Tokenizer also passes the original tokenizer's unit test. The following steps describe how to do the unit test:

We did a performance comparison on the simple English Wikipedia dataset 20220301.en among following tokenizer:

- The original RWKV tokenizer (BlinkDL)

- Huggingface implementaion of RWKV tokenizer

- Huggingface LLama tokenizer

- Huggingface Mistral tokenizer

- Bert tokenizer

- OpenAI Tiktoken

- The Rust RWKV tokenizer

The comparison is done using this jupyter notebook in a M2 Mac mini. The Rust RWKV tokenizer is around 17x faster than the original tokenizer and 9.6x faster than OpenAI Tiktoken.

Changelog

- Version 0.9.1

- Added utf8 error handling to decoder

- Version 0.9.0

- Added multithreading for the function encode_batch()

- Added batch/multithreading comparison

- Version 0.3.0

- Fixed the issue where some characters were not encoded correctly

This tokenizer is my very first Rust program, so it might still have many bugs and silly codes :-)

Dependencies

~3–4.5MB

~78K SLoC