22 major breaking releases

| 46.0.0 | Mar 30, 2025 |

|---|---|

| 45.2.0 | Feb 23, 2025 |

| 44.0.0 | Feb 12, 2025 |

| 43.1.0 | Dec 12, 2024 |

| 20.0.0 | Mar 21, 2023 |

#499 in Database interfaces

478 downloads per month

425KB

9K

SLoC

DataFusion in Python

This is a Python library that binds to Apache Arrow in-memory query engine DataFusion.

DataFusion's Python bindings can be used as a foundation for building new data systems in Python. Here are some examples:

- Dask SQL uses DataFusion's Python bindings for SQL parsing, query planning, and logical plan optimizations, and then transpiles the logical plan to Dask operations for execution.

- DataFusion Ballista is a distributed SQL query engine that extends DataFusion's Python bindings for distributed use cases.

- DataFusion Ray is another distributed query engine that uses DataFusion's Python bindings.

Features

- Execute queries using SQL or DataFrames against CSV, Parquet, and JSON data sources.

- Queries are optimized using DataFusion's query optimizer.

- Execute user-defined Python code from SQL.

- Exchange data with Pandas and other DataFrame libraries that support PyArrow.

- Serialize and deserialize query plans in Substrait format.

- Experimental support for transpiling SQL queries to DataFrame calls with Polars, Pandas, and cuDF.

Example Usage

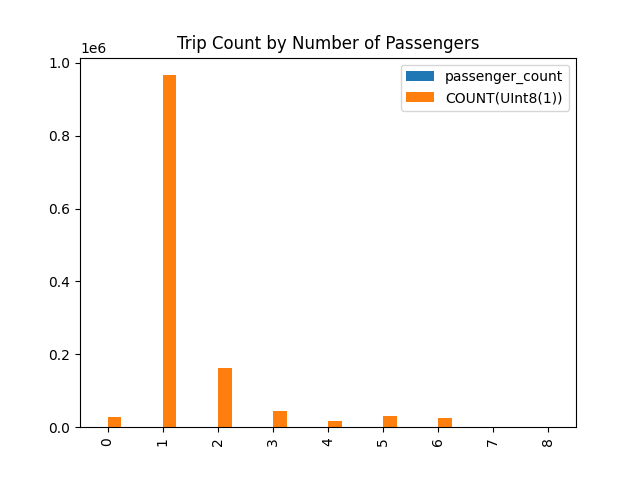

The following example demonstrates running a SQL query against a Parquet file using DataFusion, storing the results in a Pandas DataFrame, and then plotting a chart.

The Parquet file used in this example can be downloaded from the following page:

from datafusion import SessionContext

# Create a DataFusion context

ctx = SessionContext()

# Register table with context

ctx.register_parquet('taxi', 'yellow_tripdata_2021-01.parquet')

# Execute SQL

df = ctx.sql("select passenger_count, count(*) "

"from taxi "

"where passenger_count is not null "

"group by passenger_count "

"order by passenger_count")

# convert to Pandas

pandas_df = df.to_pandas()

# create a chart

fig = pandas_df.plot(kind="bar", title="Trip Count by Number of Passengers").get_figure()

fig.savefig('chart.png')

This produces the following chart:

Registering a DataFrame as a View

You can use SessionContext's register_view method to convert a DataFrame into a view and register it with the context.

from datafusion import SessionContext, col, literal

# Create a DataFusion context

ctx = SessionContext()

# Create sample data

data = {"a": [1, 2, 3, 4, 5], "b": [10, 20, 30, 40, 50]}

# Create a DataFrame from the dictionary

df = ctx.from_pydict(data, "my_table")

# Filter the DataFrame (for example, keep rows where a > 2)

df_filtered = df.filter(col("a") > literal(2))

# Register the dataframe as a view with the context

ctx.register_view("view1", df_filtered)

# Now run a SQL query against the registered view

df_view = ctx.sql("SELECT * FROM view1")

# Collect the results

results = df_view.collect()

# Convert results to a list of dictionaries for display

result_dicts = [batch.to_pydict() for batch in results]

print(result_dicts)

This will output:

[{'a': [3, 4, 5], 'b': [30, 40, 50]}]

Configuration

It is possible to configure runtime (memory and disk settings) and configuration settings when creating a context.

runtime = (

RuntimeEnvBuilder()

.with_disk_manager_os()

.with_fair_spill_pool(10000000)

)

config = (

SessionConfig()

.with_create_default_catalog_and_schema(True)

.with_default_catalog_and_schema("foo", "bar")

.with_target_partitions(8)

.with_information_schema(True)

.with_repartition_joins(False)

.with_repartition_aggregations(False)

.with_repartition_windows(False)

.with_parquet_pruning(False)

.set("datafusion.execution.parquet.pushdown_filters", "true")

)

ctx = SessionContext(config, runtime)

Refer to the API documentation for more information.

Printing the context will show the current configuration settings.

print(ctx)

Extensions

For information about how to extend DataFusion Python, please see the extensions page of the online documentation.

More Examples

See examples for more information.

Executing Queries with DataFusion

- Query a Parquet file using SQL

- Query a Parquet file using the DataFrame API

- Run a SQL query and store the results in a Pandas DataFrame

- Run a SQL query with a Python user-defined function (UDF)

- Run a SQL query with a Python user-defined aggregation function (UDAF)

- Query PyArrow Data

- Create dataframe

- Export dataframe

Running User-Defined Python Code

Substrait Support

How to install

uv

uv add datafusion

Pip

pip install datafusion

# or

python -m pip install datafusion

Conda

conda install -c conda-forge datafusion

You can verify the installation by running:

>>> import datafusion

>>> datafusion.__version__

'0.6.0'

How to develop

This assumes that you have rust and cargo installed. We use the workflow recommended by pyo3 and maturin. The Maturin tools used in this workflow can be installed either via uv or pip. Both approaches should offer the same experience. It is recommended to use uv since it has significant performance improvements

over pip.

Bootstrap (uv):

By default uv will attempt to build the datafusion python package. For our development we prefer to build manually. This means

that when creating your virtual environment using uv sync you need to pass in the additional --no-install-package datafusion

and for uv run commands the additional parameter --no-project

# fetch this repo

git clone git@github.com:apache/datafusion-python.git

# create the virtual enviornment

uv sync --dev --no-install-package datafusion

# activate the environment

source .venv/bin/activate

Bootstrap (pip):

# fetch this repo

git clone git@github.com:apache/datafusion-python.git

# prepare development environment (used to build wheel / install in development)

python3 -m venv .venv

# activate the venv

source .venv/bin/activate

# update pip itself if necessary

python -m pip install -U pip

# install dependencies

python -m pip install -r pyproject.toml

The tests rely on test data in git submodules.

git submodule update --init

Whenever rust code changes (your changes or via git pull):

# make sure you activate the venv using "source venv/bin/activate" first

maturin develop --uv

python -m pytest

Alternatively if you are using uv you can do the following without

needing to activate the virtual environment:

uv run --no-project maturin develop --uv

uv --no-project pytest .

Running & Installing pre-commit hooks

datafusion-python takes advantage of pre-commit to assist developers with code linting to help reduce

the number of commits that ultimately fail in CI due to linter errors. Using the pre-commit hooks is optional for the

developer but certainly helpful for keeping PRs clean and concise.

Our pre-commit hooks can be installed by running pre-commit install, which will install the configurations in

your DATAFUSION_PYTHON_ROOT/.github directory and run each time you perform a commit, failing to complete

the commit if an offending lint is found allowing you to make changes locally before pushing.

The pre-commit hooks can also be run adhoc without installing them by simply running pre-commit run --all-files

Running linters without using pre-commit

There are scripts in ci/scripts for running Rust and Python linters.

./ci/scripts/python_lint.sh

./ci/scripts/rust_clippy.sh

./ci/scripts/rust_fmt.sh

./ci/scripts/rust_toml_fmt.sh

How to update dependencies

To change test dependencies, change the pyproject.toml and run

uv sync --dev --no-install-package datafusion

Dependencies

~85MB

~1.5M SLoC