4 stable releases

| Version | Released |
|---|---|
| 3.0.2 | Apr 3, 2025 |
| 3.0.1 | |
| 2.0.0 | Sep 13, 2024 |
| 1.1.0 | Apr 15, 2024 |
| 1.0.0 | Mar 11, 2024 |

#11 in Machine learning

882 downloads per month

100KB

1.5K SLoC

Want to engage with us? Join our Discord channel!

- Stay updated on new features 📢

- Share your insights and suggestions 💬

- Get help with configuration and usage 🛠️

- Report bugs 🐛

Quick Install ⚡

CLI with cargo 🦀

cargo install code2prompt

SDK with pip 🐍

pip install code2prompt-rs

How is it useful?

Core

code2prompt is a code ingestion tool that streamlines the process of creating LLM prompts for code analysis, generation, and other tasks. It works by traversing directories, building a tree structure, and gathering information about each file. The core library can easily be integrated into other applications.

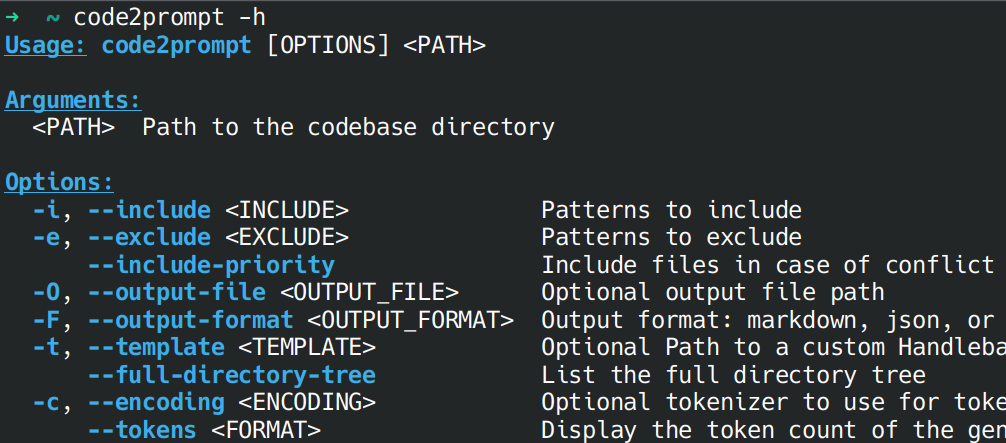
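To make the traversal idea concrete, here is a minimal sketch in plain Python of walking a directory, building a nested tree, and recording a little information per file. It uses only the standard library and is not the core library's actual implementation; the names `FileInfo`, `build_tree`, and `render_tree` are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class FileInfo:
    """Per-file metadata gathered during traversal (illustrative fields only)."""
    size: int
    extension: str


def build_tree(root: Path) -> dict:
    """Return a nested dict: directories map to dicts, files map to FileInfo."""
    tree = {}
    for entry in sorted(root.iterdir(), key=lambda p: p.name):
        if entry.is_dir():
            tree[entry.name] = build_tree(entry)
        elif entry.is_file():
            tree[entry.name] = FileInfo(entry.stat().st_size, entry.suffix)
    return tree


def render_tree(tree: dict, depth: int = 0) -> list[str]:
    """Flatten the nested tree into indented lines suitable for a prompt."""
    lines = []
    prefix = "  " * depth
    for name, node in tree.items():
        if isinstance(node, dict):
            lines.append(f"{prefix}{name}/")
            lines.extend(render_tree(node, depth + 1))
        else:
            lines.append(f"{prefix}{name} ({node.size} bytes)")
    return lines
```

Calling `"\n".join(render_tree(build_tree(Path("."))))` would give an indented listing of the current directory that could be placed at the top of a prompt.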

CLI

The code2prompt command line interface (CLI) is designed for people who want to generate prompts directly from a codebase. The generated prompt is automatically copied to the clipboard and can also be saved to an output file. You can also customize prompt generation with Handlebars templates. Check out the provided prompts in the documentation!
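As a rough illustration of what template-driven prompt generation means, the sketch below fills a fixed prompt skeleton with project data. It uses Python's `string.Template` as a stand-in for Handlebars, so the syntax differs from the real templates, and the placeholder names (`project`, `source_tree`, `task`) are assumptions made for this example rather than variables the tool is known to expose.

```python
from string import Template

# A stand-in for a Handlebars template: $name marks a slot to fill.
PROMPT_TEMPLATE = Template(
    "Project: $project\n"
    "Source tree:\n$source_tree\n\n"
    "Task: $task\n"
)


def render_prompt(project: str, source_tree: str, task: str) -> str:
    """Fill the template slots; raises KeyError if a slot is left unfilled."""
    return PROMPT_TEMPLATE.substitute(
        project=project, source_tree=source_tree, task=task
    )
```

Swapping the template text changes the shape of the prompt (code review, documentation, refactoring) without touching the code that gathers the files.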

SDK

The code2prompt software development kit (SDK) offers Python bindings to the core library. It is well suited to AI agents or automation scripts that need to interact with a codebase programmatically. The SDK is hosted on PyPI and can be installed via pip.

MCP

code2prompt is also available as a Model Context Protocol (MCP) server, which allows you to run it as a local service. This gives LLMs a tool for automatically gathering well-structured context from your codebase.

Documentation 📚

Check our online documentation for detailed instructions.

Features

Code2Prompt transforms your entire codebase into a well-structured prompt for large language models. Key features include:

- Automatic Code Processing: Convert codebases of any size into readable, formatted prompts

- Smart Filtering: Include/exclude files using glob patterns and respect `.gitignore` rules

- Flexible Templating: Customize prompts with Handlebars templates for different use cases

- Token Tracking: Track token usage to stay within LLM context limits

- Git Integration: Include diffs, logs, and branch comparisons in your prompts

- Developer Experience: Automatic clipboard copy, line numbers, and file organization options
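The include/exclude filtering mentioned in the list above can be sketched with Python's standard `fnmatch` module. This is an approximation under stated assumptions: `fnmatch` patterns are not identical to `.gitignore` rules or to the glob engine the tool uses, the function name `select_files` is hypothetical, and letting exclude patterns win over include patterns is a choice made for this example.

```python
from fnmatch import fnmatchcase


def select_files(paths, include=("*",), exclude=()):
    """Keep paths matching any include pattern and no exclude pattern."""
    selected = []
    for path in paths:
        included = any(fnmatchcase(path, pat) for pat in include)
        excluded = any(fnmatchcase(path, pat) for pat in exclude)
        # Assumption for this sketch: an exclude match always wins.
        if included and not excluded:
            selected.append(path)
    return selected


paths = ["src/main.rs", "src/lib.rs", "target/debug/build.rs", "README.md"]
rust_sources = select_files(paths, include=["*.rs"], exclude=["target/*"])
```

Here `rust_sources` keeps the two files under `src/` and drops both the build artifact and the README.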

Stop manually copying files and formatting code for LLMs. Code2Prompt handles the tedious work so you can focus on getting insights and solutions from AI models.
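For the token tracking feature listed above, the underlying idea is to count tokens per file and compare the total with a model's context limit. The sketch below uses a crude heuristic of about four characters per token, which is an assumption for illustration only; a real tokenizer gives different counts, and the function names are hypothetical.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; a real tokenizer should replace this heuristic."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(parts, limit: int):
    """Return (estimated total tokens, whether the total fits within limit)."""
    total = sum(estimate_tokens(part) for part in parts)
    return total, total <= limit
```

A caller could use the boolean to decide whether to tighten the exclude patterns before sending the prompt.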

Alternative Installation

Refer to the documentation for detailed installation instructions.

Binary releases

Download the latest binary for your OS from Releases.

Source build

Requires Git and a Rust toolchain with Cargo, both of which are used by the commands below:

git clone https://github.com/mufeedvh/code2prompt.git

cd code2prompt/

cargo install --path crates/code2prompt

License

Licensed under the MIT License; see LICENSE for more information.

Liked the project?

If you liked the project and found it useful, please give it a ⭐!

Contribution

Ways to contribute:

- Suggest a feature

- Report a bug

- Fix something and open a pull request

- Help me document the code

- Spread the word

Dependencies

~36–55MB

~1M SLoC