19 stable releases (3 major)

| 4.4.2 | Oct 27, 2024 |

|---|---|

| 4.4.1 | Apr 23, 2024 |

| 4.3.1 | Mar 1, 2024 |

| 4.0.0 | Dec 26, 2023 |

| 1.0.0 | Jul 21, 2023 |

#18 in Memory management

7,721 downloads per month

Used in 19 crates

(13 directly)

185KB

1.5K

SLoC

Talc Allocator

If you find Talc useful, please consider leaving tip via Paypal

What is this for?

- Embedded systems, OS kernels, and other

no_stdenvironments - WebAssembly apps, as a drop-in replacement for the default allocator

- Subsystems in normal programs that need especially quick arena allocation

Why Talc?

- Performance is the primary focus, while retaining generality

- Custom Out-Of-Memory handlers for just-in-time heap management and recovery

- Supports creating and resizing arbitrarily many heaps

- Optional allocation statistics

- Partial validation with debug assertions enabled

- Verified with MIRI

Why not Talc?

- Doesn't integrate with operating systems' dynamic memory facilities out-of-the-box yet

- Doesn't scale well to allocation/deallocation-heavy concurrent processing

- Though it's particularly good at concurrent reallocation.

Table of Contents

Targeting WebAssembly? You can find WASM-specific usage and benchmarks here.

- Setup

- Benchmarks

- General Usage

- Advanced Usage

- Conditional Features

- Stable Rust and MSRV

- Algorithm

- Future Development

- Changelog

Setup

As a global allocator:

use talc::*;

static mut ARENA: [u8; 10000] = [0; 10000];

#[global_allocator]

static ALLOCATOR: Talck<spin::Mutex<()>, ClaimOnOom> = Talc::new(unsafe {

// if we're in a hosted environment, the Rust runtime may allocate before

// main() is called, so we need to initialize the arena automatically

ClaimOnOom::new(Span::from_const_array(core::ptr::addr_of!(ARENA)))

}).lock();

fn main() {

let mut vec = Vec::with_capacity(100);

vec.extend(0..300usize);

}

Or use it as an arena allocator via the Allocator API with spin as follows:

#![feature(allocator_api)]

use talc::*;

use core::alloc::{Allocator, Layout};

static mut ARENA: [u8; 10000] = [0; 10000];

fn main () {

let talck = Talc::new(ErrOnOom).lock::<spin::Mutex<()>>();

unsafe { talck.lock().claim(ARENA.as_mut().into()); }

talck.allocate(Layout::new::<[u32; 16]>());

}

Note that while the spin crate's mutexes are used here, any lock implementing lock_api works.

See General Usage and Advanced Usage for more details.

Benchmarks

Heap Efficiency Benchmark Results

The average occupied capacity upon first allocation failure when randomly allocating/deallocating/reallocating.

| Allocator | Average Random Actions Heap Efficiency |

|---|---|

| Dlmalloc | 99.14% |

| Rlsf | 99.06% |

| Talc | 98.97% |

| Linked List | 98.36% |

| Buddy Alloc | 63.14% |

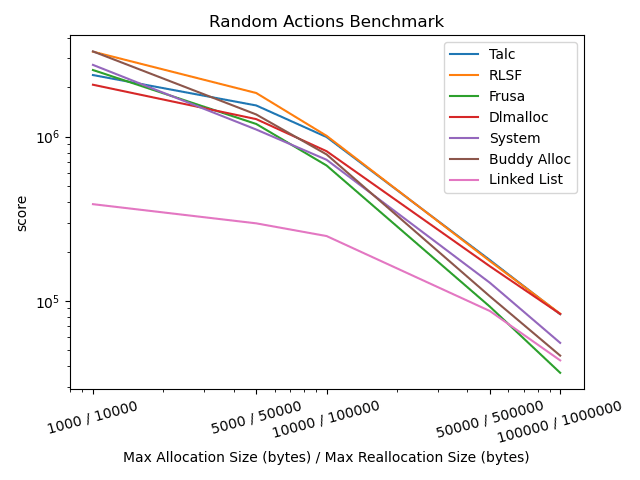

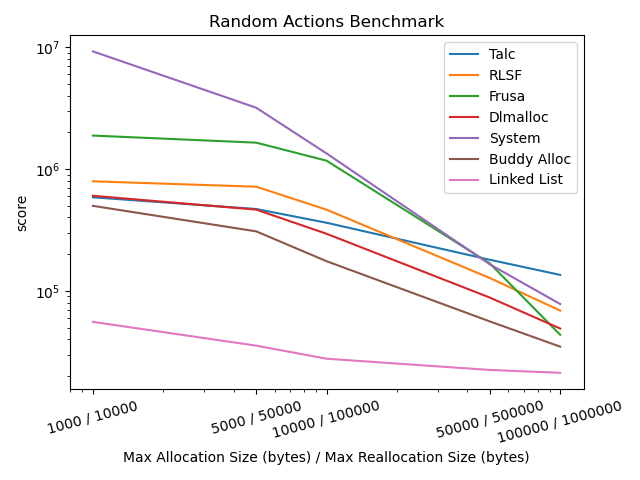

Random Actions Benchmark

The number of successful allocations, deallocations, and reallocations within the allotted time.

1 Thread

4 Threads

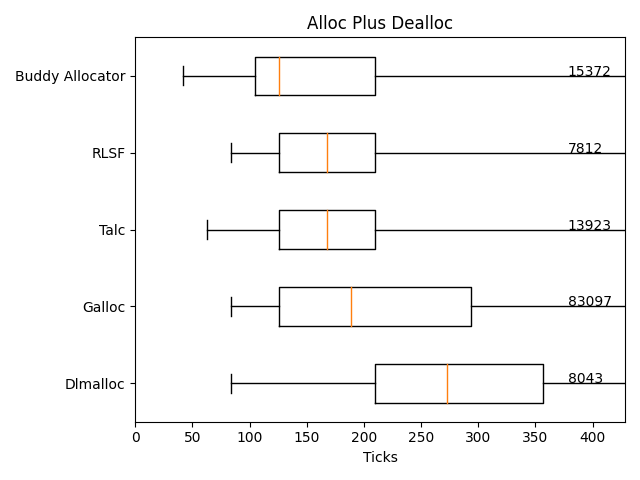

Allocations & Deallocations Microbenchmark

Label indicates the maximum within 50 standard deviations from the median. Max allocation size is 0x10000.

General Usage

Here is the list of important Talc methods:

- Constructors:

new

- Information:

get_allocated_span- returns the minimum heap span containing all allocated memory in an established heapget_counters- if feature"counters"is enabled, this returns a struct with allocation statistics

- Management:

claim- claim memory to establishing a new heapextend- extend an established heaptruncate- reduce the extent of an established heaplock- wraps theTalcin aTalck, which supports theGlobalAllocandAllocatorAPIs

- Allocation:

mallocfreegrowgrow_in_placeshrink

Read their documentation for more info.

Span is a handy little type for describing memory regions, as trying to manipulate Range<*mut u8> or *mut [u8] or base_ptr-size pairs tends to be inconvenient or annoying.

Advanced Usage

The most powerful feature of the allocator is that it has a modular OOM handling system, allowing you to fail out of or recover from allocation failure easily.

Provided OomHandler implementations include:

ErrOnOom: allocations fail on OOMClaimOnOom: claims a heap upon first OOM, useful for initializationWasmHandler: itegrate with WebAssembly'smemorymodule for automatic memory heap management

As an example of a custom implementation, recovering by extending the heap is implemented below.

use talc::*;

struct MyOomHandler {

heap: Span,

}

impl OomHandler for MyOomHandler {

fn handle_oom(talc: &mut Talc<Self>, layout: core::alloc::Layout) -> Result<(), ()> {

// Talc doesn't have enough memory, and we just got called!

// We'll go through an example of how to handle this situation.

// We can inspect `layout` to estimate how much we should free up for this allocation

// or we can extend by any amount (increasing powers of two has good time complexity).

// (Creating another heap with `claim` will also work.)

// This function will be repeatedly called until we free up enough memory or

// we return Err(()) causing allocation failure. Be careful to avoid conditions where

// the heap isn't sufficiently extended indefinitely, causing an infinite loop.

// an arbitrary address limit for the sake of example

const HEAP_TOP_LIMIT: *mut u8 = 0x80000000 as *mut u8;

let old_heap: Span = talc.oom_handler.heap;

// we're going to extend the heap upward, doubling its size

// but we'll be sure not to extend past the limit

let new_heap: Span = old_heap.extend(0, old_heap.size()).below(HEAP_TOP_LIMIT);

if new_heap == old_heap {

// we won't be extending the heap, so we should return Err

return Err(());

}

unsafe {

// we're assuming the new memory up to HEAP_TOP_LIMIT is unused and allocatable

talc.oom_handler.heap = talc.extend(old_heap, new_heap);

}

Ok(())

}

}

Conditional Features

"lock_api"(default): Provides theTalcklocking wrapper type that implementsGlobalAlloc."allocator"(default, requires nightly): Provides anAllocatortrait implementation viaTalck."nightly_api"(default, requires nightly): Provides theSpan::from(*mut [T])andSpan::from_slicefunctions."counters":Talcwill track heap and allocation metrics. UseTalc::get_countersto access them."allocator-api2":Talckwill implementallocator_api2::alloc::Allocatorif"allocator"is not active.

Stable Rust and MSRV

Talc can be built on stable Rust by disabling "allocator" and "nightly_api". The MSRV is 1.67.1.

Disabling "nightly_api" disables Span::from(*mut [T]), Span::from(*const [T]), Span::from_const_slice and Span::from_slice.

Algorithm

This is a dlmalloc-style linked list allocator with boundary tagging and bucketing, aimed at general-purpose use cases. Allocation is O(n) worst case (but in practice its near-constant time, see microbenchmarks), while in-place reallocations and deallocations are O(1).

Additionally, the layout of chunk metadata is rearranged to allow for smaller minimum-size chunks to reduce memory overhead of small allocations. The minimum chunk size is 3 * usize, with a single usize being reserved per allocation. This is more efficient than dlmalloc and galloc, despite using a similar algorithm.

Future Development

- Support better concurrency, as it's the main deficit of the allocator

- Change the default features to be stable by default

- Add out-of-the-box support for more systems

- Allow for integrating with a backing allocator & (deferred) freeing of unused memory (e.g. better integration with mmap/paging)

Update: All of this is currently in the works. No guarantees on when it will be done, but significant progress has been made.

Changelog

v4.4.2

-

polarathene: Replace README relative links with fully-qualified links.

-

polarathene: Improve docs for

stable_examples/examples/std_global_allocator.rs. -

Improved docs for

stable_examples/examples/stable_allocator_api.rsandstable_examples/examples/std_global_allocator.rs. -

Deprecated the

Span::from*function for converting from shared references and const pointers, as they make committing UB easy. These will be removed in v5. -

Fixed up a bunch of warnings all over the project.

v4.4.1

- Added utility function

excepttoSpan, which takes the set difference, potentially splitting theSpan. Thanks bjorn3 for the suggestion!

v4.4.0

- Added feature

allocator-api2which allows using theAllocatortrait on stable via theallocator-api2crate. Thanks jess-sol!

v4.3.1

- Updated the README a little

v4.3.0

-

Added an implementation for

Displayfor the counters. Hopefully this makes your logs a bit prettier.- Bug me if you have opinions about the current layout, I'm open to changing it.

-

Added Frusa and RLSF to the benchmarks.

- Good showing by RLSF all around, and Frusa has particular workloads it excels at.

-

Changed random actions benchmark to measure over various allocation sizes.

v4.2.0

-

Optimized reallocation to allows other allocation operations to occur while memcopy-ing if an in-place reallocation failed.

- As a side effect Talc now has a

grow_in_placefunction that returnsErrif growing the memory in-place isn't possible. - A graph of the random actions benchmark with a workload that benefits from this has been included in the benchmarks section.

- As a side effect Talc now has a

-

Added

Span::from_*andFrom<>functions for const pointers and shared references.- This makes creating a span in static contexts on stable much easier:

Span::from_const_array(addr_of!(MEMORY))

- This makes creating a span in static contexts on stable much easier:

-

Fix: Made

TalckderiveDebugagain. -

Contribution by Ken Hoover: add Talc arena-style allocation size and perf WASM benchmarks

- This might be a great option if you have a known dynamic memory requirement and would like to reduce your WASM size a little more.

-

wasm-sizenow uses wasm-opt, giving more realistic size differences for users of wasm-pack -

Improved shell scripts

-

Overhauled microbenchmarks

- No longer simulates high-heap pressure as tolerating allocation failure is rare

- Data is now displayed using box-and-whisker plots

v4.1.1

- Fix: Reset MSRV to 1.67.1 and added a check to

test.shfor it

v4.1.0 (yanked, use 4.1.1)

- Added optional tracking of allocation metrics. Thanks Ken Hoover for the suggestion!

- Enable the

"counters"feature. Access the data viatalc.get_counters() - Metrics include allocation count, bytes available, fragmentation, overhead, and more.

- Enable the

- Improvements to documentation

- Improved and updated benchmarks

- Integrated the WASM performance benchmark into the project. Use

wasm-bench.shto run (requires wasm-pack and deno) - Improved

wasm-sizeandwasm-size.sh

v4.0.0

- Changed

Talck's API to be more inline with Rust norms.Talcknow hides its internal structure (no more.0).Talck::talc()has been replaced byTalck::lock().Talck::new()andTalck::into_inner(self)have been added.- Removed

TalckRefand implemented theAllocatortrait onTalckdirectly. No need to calltalck.allocator()anymore.

- Changed API for provided locking mechanism

- Moved

AssumeUnlockableintotalc::locking::AssumeUnlockable - Removed

Talc::lock_assume_single_threaded, use.lock::<talc::locking::AssumeUnlockable>()if necessary.

- Moved

- Improvements to documentation here and there. Thanks polarathene for the contribution!

v3.1.2

- Some improvements to documentation.

v3.1.1

- Changed the WASM OOM handler's behavior to be more robust if other code calls

memory.growduring the allocator's use.

v3.1.0

- Reduced use of nightly-only features, and feature-gated the remainder (

Span::from(*mut [T])andSpan::from_slice) behindnightly_api. nightly_apifeature is default-enabled- WARNING: use of

default-features = falsemay cause unexpected errors if the gated functions are used. Consider addingnightly_apior using another function.

- WARNING: use of

v3.0.1

- Improved documentation

- Improved and updated benchmarks

- Increased the range of allocation sizes on Random Actions. (sorry Buddy Allocator!)

- Increased the number of iterations the Heap Efficiency benchmark does to produce more accurate and stable values.

v3.0.0

- Added support for multiple discontinuous heaps! This required some major API changes

new_arenano longer exists (usenewand thenclaim)inithas been replaced withclaimclaim,extendandtruncatenow return the new heap extentInitOnOomis nowClaimOnOom.- All of the above now have different behavior and documentation.

- Each heap now has a fixed overhead of one

usizeat the bottom.

To migrate from v2 to v3, keep in mind that you must keep track of the heaps if you want to resize them, by storing the returned Spans. Read claim, extend and truncate's documentation for all the details.

v2.2.1

- Rewrote the allocator internals to place allocation metadata above the allocation.

- This will have the largest impact on avoiding false sharing, where previously, the allocation metadata for one allocation would infringe on the cache-line of the allocation before it, even if a sufficiently high alignment was demanded. Single-threaded performance marginally increased, too.

- Removed heap_exhaustion and replaced heap_efficiency benchmarks.

- Improved documentation and other resources.

- Changed the WASM size measurement to include slightly less overhead.

v2.2.0

- Added

dlmallocto the benchmarks. - WASM should now be fully supported via

TalckWasm. Let me know what breaks ;)- Find more details here.

v2.1.0

- Tests are now passing on 32 bit targets.

- Documentation fixes and improvements for various items.

- Fixed using

lock_apiwithoutallocator. - Experimental WASM support has been added via

TalckWasmon WASM targets.

v2.0.0

- Removed dependency on

spinand switched to usinglock_api(thanks Stefan Lankes)- You can specify the lock you want to use with

talc.lock::<spin::Mutex<()>>()for example.

- You can specify the lock you want to use with

- Removed the requirement that the

Talcstruct must not be moved, and removed themovfunction.- The arena is now used to store metadata, so extremely small arenas will result in allocation failure.

- Made the OOM handling system use generics and traits instead of a function pointer.

- Use

ErrOnOomto do what it says on the tin.InitOnOomis similar but inits to the given span if completely uninitialized. ImplementOomHandleron any struct to implement your own behaviour (the OOM handler state can be accessed fromhandle_oomviatalc.oom_handler).

- Use

- Changed the API and internals of

Spanand other changes to passmiri's Stacked Borrows checks.- Span now uses pointers exclusively and carries provenance.

- Updated the benchmarks in a number of ways, notably adding

buddy_allocand removingsimple_chunk_allocator.