2 unstable releases

| Version | Release date |
|---|---|
| 0.2.0 | May 1, 2019 |
| 0.1.0 | Mar 25, 2019 |


Used in meilies-client

57KB, 1.5K SLoC

MeiliES

A Rust-based event store using the Redis protocol

Introduction

This project is no longer maintained; please consider using another tool instead.

MeiliES is an event sourcing database that uses RESP (REdis Serialization Protocol) to communicate. This makes it possible to build clients by reusing existing implementations of the protocol; for example, the official Redis command-line interface, redis-cli, can talk to MeiliES. There is a release blog post if you want to know more.
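Because the protocol is RESP, a client sends each command as an array of bulk strings. The sketch below encodes such an array. The `publish` argument layout mirrors the `meilies-cli publish` syntax shown later in this README and is an assumption about what the server expects on the wire; `encode_command` is a hypothetical helper, not part of MeiliES.

```rust
/// Encodes a command as a RESP array of bulk strings, the format
/// Redis clients use when sending commands to a server.
fn encode_command(args: &[&str]) -> Vec<u8> {
    let mut out = Vec::new();
    // Array header: '*', the number of elements, then CRLF.
    out.extend_from_slice(format!("*{}\r\n", args.len()).as_bytes());
    for arg in args {
        // Bulk string: '$', the length in bytes, CRLF, the raw bytes, CRLF.
        out.extend_from_slice(format!("${}\r\n", arg.len()).as_bytes());
        out.extend_from_slice(arg.as_bytes());
        out.extend_from_slice(b"\r\n");
    }
    out
}

fn main() {
    // Assumption: the server accepts the same arguments as
    // `meilies-cli publish <stream> <event-name> <data>`.
    let request = encode_command(&["publish", "my-little-stream", "my-event-name", "Hello World!"]);
    // Debug formatting shows the CRLF separators as \r\n.
    println!("{:?}", String::from_utf8(request).unwrap());
}
```

Lengths are counted in bytes, not characters, which is why the encoder uses `str::len`.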

An event store is similar to Kafka or RabbitMQ, but it stores events on disk indefinitely. The main purpose of the server is to publish the events of a stream to all subscribed clients; events are saved in the order they are received. A client can also specify the event number (numbers increase with each event) from which it wants to read, so after a crash it can rebuild its state by reading only the events it has not processed yet. Keep in mind that a message queue is not designed for event sourcing.
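The sketch below illustrates these ideas with a hypothetical in-memory `EventLog` type, not MeiliES's sled-backed storage. Each event gets an increasing number in reception order, and a client that crashed after processing some events replays only the rest.

```rust
/// Hypothetical in-memory, append-only log for a single stream.
/// An event's number is its position in the log, starting at 0.
#[derive(Default)]
struct EventLog {
    events: Vec<(String, String)>, // (event name, data)
}

impl EventLog {
    /// Stores an event in reception order and returns its event number.
    fn append(&mut self, name: &str, data: &str) -> u64 {
        self.events.push((name.to_string(), data.to_string()));
        (self.events.len() - 1) as u64
    }

    /// Iterates over the events numbered `from` and above.
    fn read_from(&self, from: u64) -> impl Iterator<Item = (u64, &str, &str)> + '_ {
        self.events
            .iter()
            .enumerate()
            .skip(from as usize)
            .map(|(i, (name, data))| (i as u64, name.as_str(), data.as_str()))
    }
}

fn main() {
    let mut log = EventLog::default();
    for who in ["World", "Cathy", "Kevin", "Donut"] {
        log.append("my-event-name", &format!("Hello {}!", who));
    }

    // Client state: the greetings it has applied so far. Suppose it had
    // processed events 0 and 1 before crashing.
    let mut greetings = vec!["Hello World!".to_string(), "Hello Cathy!".to_string()];
    let next = greetings.len() as u64;

    // Recovery: replay only the events it has not seen yet.
    for (_number, _name, data) in log.read_from(next) {
        greetings.push(data.to_string());
    }
    println!("{:?}", greetings);
}
```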

Features

- Event publication

- TCP stream subscriptions from an optional event number

- Resilient connections (reconnecting when closed)

- Redis based protocol

- Written entirely in Rust, using sled as the internal storage

- Takes about 2 minutes to compile
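One common way to get the "reconnecting when closed" behaviour listed above is to retry the connection with a growing delay. The sketch below is generic and assumes exponential backoff; `connect_with_retry` is a hypothetical helper, not the meilies-client API, and the real client may reconnect differently.

```rust
use std::net::TcpStream;
use std::thread;
use std::time::Duration;

/// Calls `connect` until it succeeds or `max_attempts` attempts have failed,
/// doubling the delay between attempts (exponential backoff).
fn connect_with_retry<T, E>(
    mut connect: impl FnMut() -> Result<T, E>,
    max_attempts: u32,
    initial_delay: Duration,
) -> Result<T, E> {
    let mut delay = initial_delay;
    let mut attempt = 1;
    loop {
        match connect() {
            Ok(conn) => return Ok(conn),
            // Out of attempts: report the last error to the caller.
            Err(e) if attempt >= max_attempts => return Err(e),
            Err(_) => {
                thread::sleep(delay);
                delay *= 2;
                attempt += 1;
            }
        }
    }
}

fn main() {
    // 127.0.0.1:6480 is the default address mentioned in this README.
    let result = connect_with_retry(
        || TcpStream::connect("127.0.0.1:6480"),
        3,
        Duration::from_millis(100),
    );
    match result {
        Ok(_) => println!("connected"),
        Err(e) => println!("gave up: {}", e),
    }
}
```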

Building MeiliES

To run MeiliES you need Rust; you can install it by following the steps on https://rustup.rs.

Once you have Rust in your PATH you can clone and build the MeiliES binaries.

git clone https://github.com/meilisearch/MeiliES.git

cd MeiliES

cargo install --path meilies-server

cargo install --path meilies-cli

Basic Event Store Usage

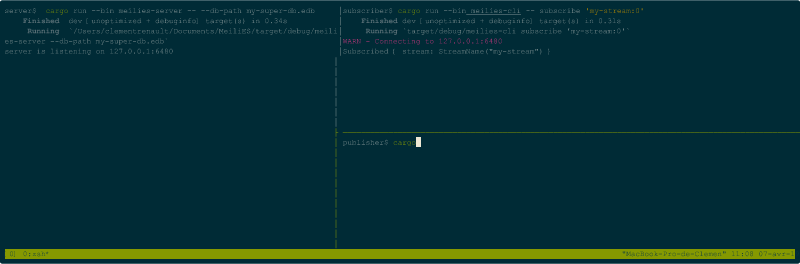

Once MeiliES is installed and available in your PATH, you can run it by executing the following command.

meilies-server --db-path my-little-db.edb

There is now a MeiliES server running on your machine and listening on 127.0.0.1:6480.

In another terminal window, you can start a client that listens only for new events.

meilies-cli subscribe 'my-little-stream'

In yet another terminal, you can publish new events. All clients subscribed to that stream will receive them.

meilies-cli publish 'my-little-stream' 'my-event-name' 'Hello World!'

meilies-cli publish 'my-little-stream' 'my-event-name' 'Hello Cathy!'

meilies-cli publish 'my-little-stream' 'my-event-name' 'Hello Kevin!'

meilies-cli publish 'my-little-stream' 'my-event-name' 'Hello Donut!'

But that is not a very interesting use of event sourcing, is it? Let's try something more interesting.

Real Event Store Usage

MeiliES stores all the events of all the streams that were sent by all the clients in the order they were received.

Let's check that by telling a client the event number from which it should start reading. Once the client has received all the stored events, the server sends new events as soon as it receives them.
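The catch-up-then-live behaviour described above can be modelled in a few lines. The sketch below uses a hypothetical `Stream` type with `std::sync::mpsc` channels standing in for client connections; it is not MeiliES's implementation. Replaying stored events and registering for live ones happen under one `&mut self` borrow, so no event is missed or duplicated in between; a multi-threaded server would need a lock around both steps for the same guarantee.

```rust
use std::sync::mpsc::{channel, Receiver, Sender};

/// Hypothetical model of one stream: its stored events plus the live
/// subscribers, each with the event number it asked to start from.
#[derive(Default)]
struct Stream {
    events: Vec<String>,
    subscribers: Vec<(u64, Sender<(u64, String)>)>,
}

impl Stream {
    /// Replays stored events numbered `from` and above, then registers
    /// the subscriber for live events.
    fn subscribe(&mut self, from: u64) -> Receiver<(u64, String)> {
        let (tx, rx) = channel();
        for (number, event) in self.events.iter().enumerate().skip(from as usize) {
            // `rx` is still alive here, so this send cannot fail.
            tx.send((number as u64, event.clone())).unwrap();
        }
        self.subscribers.push((from, tx));
        rx
    }

    /// Stores an event and forwards it to every subscriber whose start
    /// number it has reached; subscribers whose receiver is gone are dropped.
    fn publish(&mut self, event: &str) {
        let number = self.events.len() as u64;
        self.events.push(event.to_string());
        self.subscribers
            .retain(|(from, tx)| number < *from || tx.send((number, event.to_string())).is_ok());
    }
}

fn main() {
    let mut stream = Stream::default();
    stream.publish("Hello World!");
    stream.publish("Hello Cathy!");
    let rx = stream.subscribe(1); // catches up on event 1, then follows live events
    stream.publish("Hello Kevin!");
    for (number, event) in rx.try_iter() {
        println!("{}: {}", number, event);
    }
}
```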

Stream name specification

A stream name is composed as follows:

{name}{:from}{:to}

- name: the name of the stream; case-sensitive and must not contain spaces (prefer dash-separated words).

- from: the event number to start reading from. Optional; if it is not set, MeiliES starts from the end of the stream and only sends new events.

- to: the event number at which to stop reading. This bound is exclusive, so that event itself is not sent. Optional; if it is not given, the subscription never stops.
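This grammar is simple enough to parse by splitting on colons. Below is a hypothetical `parse_stream_spec` written from the specification above rather than from MeiliES's source, so its edge-case handling (rejecting any whitespace in the name, or extra `:` parts) is an assumption.

```rust
/// A parsed `{name}{:from}{:to}` stream name.
#[derive(Debug, PartialEq)]
struct StreamSpec {
    name: String,
    from: Option<u64>, // None: start from the end, only new events
    to: Option<u64>,   // None: never stop; otherwise an exclusive bound
}

/// Parses an optional event number; `None` means the part was absent.
fn parse_number(part: Option<&str>) -> Result<Option<u64>, String> {
    match part {
        None => Ok(None),
        Some(n) => n
            .parse::<u64>()
            .map(Some)
            .map_err(|e| format!("invalid event number {:?}: {}", n, e)),
    }
}

fn parse_stream_spec(spec: &str) -> Result<StreamSpec, String> {
    let mut parts = spec.split(':');
    // `split` always yields at least one (possibly empty) item.
    let name = parts.next().unwrap_or("");
    if name.is_empty() || name.chars().any(char::is_whitespace) {
        return Err(format!("invalid stream name {:?}", name));
    }
    let from = parse_number(parts.next())?;
    let to = parse_number(parts.next())?;
    if parts.next().is_some() {
        return Err(format!("too many ':' separators in {:?}", spec));
    }
    Ok(StreamSpec { name: name.to_string(), from, to })
}

fn main() {
    for spec in ["my-little-stream", "my-little-stream:2", "my-little-stream:3:5"] {
        println!("{:?}", parse_stream_spec(spec));
    }
}
```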

Examples

We can do that by appending the start event number to the stream name, separated by a colon.

meilies-cli subscribe 'my-little-stream:0'

In this example, reading starts from the first event of the stream, since event numbers start at 0. We can also start reading from the third event:

meilies-cli subscribe 'my-little-stream:2'

Or even from an event that does not exist yet! Try publishing events to this stream: only events from event number 5 (the sixth event) onward will appear.

meilies-cli subscribe 'my-little-stream:5'

It is also possible to stop reading at a given event number. Since the upper bound is exclusive, the following subscription receives events 3 and 4 only:

meilies-cli subscribe 'my-little-stream:3:5'
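Because `to` is exclusive, `my-little-stream:3:5` behaves like Rust's half-open range `3..5`: events 3 and 4 are delivered, event 5 is not. The hypothetical `delivers` predicate below spells out that rule as stated in the stream name specification.

```rust
/// Whether a subscription `{name}:{from}:{to}` delivers the event numbered
/// `number`. `to` is an exclusive bound; `None` means "never stop".
fn delivers(number: u64, from: u64, to: Option<u64>) -> bool {
    number >= from && to.map_or(true, |to| number < to)
}

fn main() {
    // 'my-little-stream:3:5' over a stream holding events 0 to 7.
    let delivered: Vec<u64> = (0..8).filter(|&n| delivers(n, 3, Some(5))).collect();
    println!("{:?}", delivered); // [3, 4]
}
```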

Current Limitations

The current implementation has some limitations related to the total number of subscribed streams: the server spawns one thread per stream for each client. For example, if two clients subscribe to the same stream, the server spawns two threads, one per client, instead of a single thread that sends new events to a pool of clients.

Worse, when a client closes its connection, its spawned threads do not stop immediately, only after further activity on the stream.
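The alternative described above, one thread per stream forwarding events to a pool of clients, can be sketched with standard channels. Here `std::sync::mpsc` senders stand in for client connections, and `Command` and `spawn_stream_worker` are hypothetical names, not MeiliES code. A disconnected client is still only noticed on the next publish, when sending to it fails, but it lingers as a channel entry rather than as a running thread.

```rust
use std::sync::mpsc::{channel, Sender};
use std::thread;

/// Messages handled by a stream's single worker thread.
enum Command {
    Subscribe(Sender<String>),
    Publish(String),
}

/// Spawns one worker thread for a stream. Each client registers its own
/// sender, and every published event is forwarded to all of them.
fn spawn_stream_worker() -> (Sender<Command>, thread::JoinHandle<()>) {
    let (tx, rx) = channel::<Command>();
    let handle = thread::spawn(move || {
        let mut clients: Vec<Sender<String>> = Vec::new();
        // The loop ends once every `Sender<Command>` has been dropped.
        for command in rx {
            match command {
                Command::Subscribe(client) => clients.push(client),
                Command::Publish(event) => {
                    // A failed send means the client hung up: forget it.
                    clients.retain(|c| c.send(event.clone()).is_ok());
                }
            }
        }
    });
    (tx, handle)
}

fn main() {
    let (stream, worker) = spawn_stream_worker();
    let (a_tx, a_rx) = channel();
    let (b_tx, b_rx) = channel();
    stream.send(Command::Subscribe(a_tx)).unwrap();
    stream.send(Command::Subscribe(b_tx)).unwrap();
    stream.send(Command::Publish("Hello World!".to_string())).unwrap();
    drop(stream); // closing the command channel stops the worker
    worker.join().unwrap();
    // Both clients were served by the same thread.
    assert_eq!(a_rx.recv().unwrap(), "Hello World!");
    assert_eq!(b_rx.recv().unwrap(), "Hello World!");
}
```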

Support

For commercial support, drop us an email at bonjour@meilisearch.com.

Dependencies

~4MB, ~59K SLoC