2 unstable releases

| Version | Released |
|---|---|
| 0.2.0 | Feb 24, 2024 |
| 0.1.0 | Jan 30, 2023 |


Little Loadshedder

A Rust hyper/tower service that implements load shedding with queuing & concurrency limiting based on latency.

It uses Little's Law to intelligently shed load in order to maintain a target average latency. It achieves this by placing a queue in front of the service it wraps, the size of which is determined by measuring the average latency of calls to the inner service. Additionally, it controls the number of concurrent requests to the inner service, in order to achieve the maximum possible throughput.
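As a rough illustration of the sizing idea, Little's Law says the average number of requests in a system equals throughput multiplied by the average time each request spends there. The sketch below applies it to pick a queue allowance. It is not this crate's actual code: `queue_capacity` and its parameters are hypothetical names, and it assumes throughput can be approximated as concurrency divided by the measured average latency.

```rust
use std::time::Duration;

/// Hypothetical helper (not part of this crate's API): how many requests may
/// wait in the queue so that the average time in the system stays near `target`.
///
/// Returns `None` when no latency has been measured yet.
pub fn queue_capacity(
    target: Duration,
    avg_latency: Duration,
    concurrency: usize,
) -> Option<usize> {
    if avg_latency.is_zero() {
        return None;
    }
    // Assumed throughput: lambda = concurrency / avg_latency.
    // Little's Law: L = lambda * W, with W = target, which gives
    // L = concurrency * target / avg_latency (integer math on nanoseconds).
    let in_system = (concurrency as u128 * target.as_nanos()) / avg_latency.as_nanos();
    // Requests already being served occupy `concurrency` of those slots;
    // the remainder is what the queue may hold.
    let queued = in_system.saturating_sub(concurrency as u128);
    Some(usize::try_from(queued).unwrap_or(usize::MAX))
}
```

With a 2 s target, a measured average of 100 ms and 10 concurrent requests, this sketch allows 200 requests in the system, 190 of them queued. If the measured latency doubles to 200 ms, the queue allowance drops to 90, roughly half.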

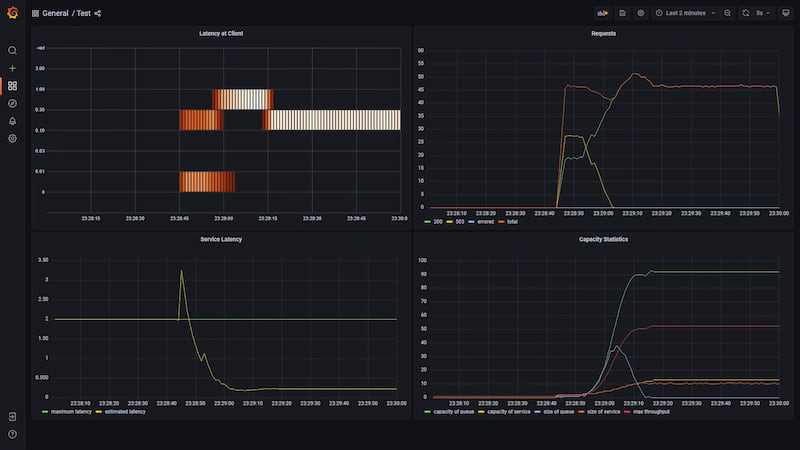

The following images show metrics from the example server under load generated by the example client.

First, when load is turned on, some requests are rejected while the middleware works out the queue size and increases the concurrency.

This quickly resolves to a steady state where the service can easily handle the load on it, and so the queue size is large.

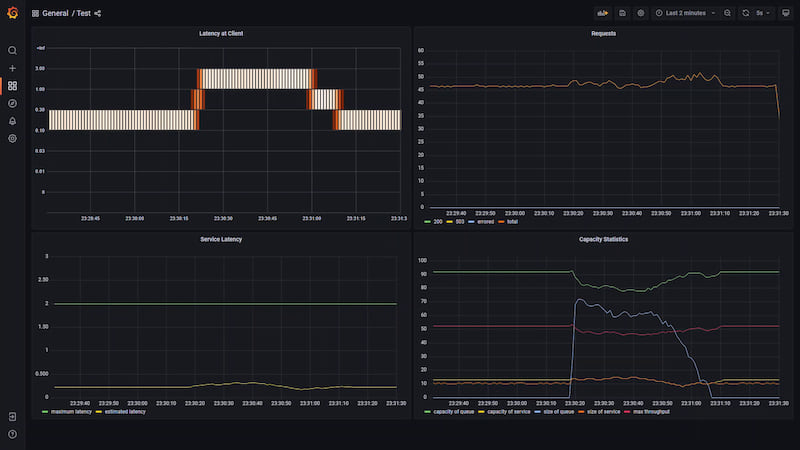

Next, we send a large burst of traffic. Note that none of it is dropped, as it is all absorbed by the queue.

Once the burst stops, the service slowly clears its backlog of requests and returns to the steady state.

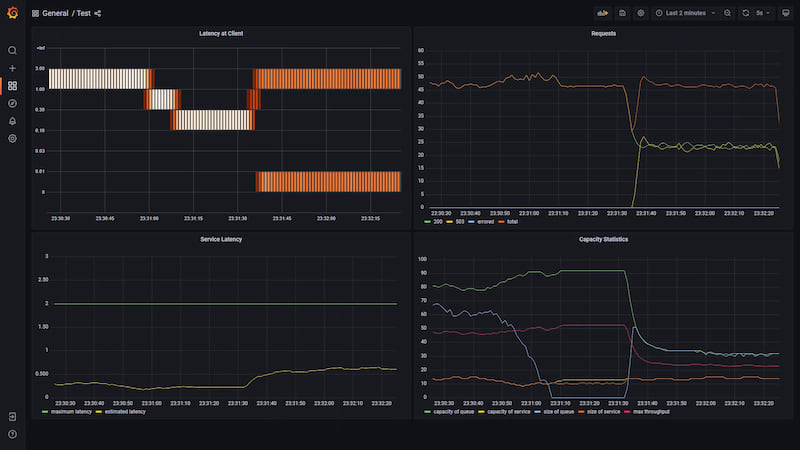

Now we simulate a service degradation: all requests take twice as long to process.

The queue shrinks to about half its original size in order to hit the target average latency. However, the service can no longer achieve this throughput, so the queue fills and requests are rejected.

Note that from the client's point of view requests are either immediately rejected or complete at roughly the target latency.
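The "immediately rejected" behaviour can be pictured as a non-blocking admission check in front of the queue. The following is a minimal sketch under that assumption, not the crate's implementation: `Admission`, `try_admit` and `release` are hypothetical names, and `limit` stands for the queue size plus the concurrency limit.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};

/// Hypothetical admission counter (illustrative only).
pub struct Admission {
    in_flight: AtomicUsize,
    limit: usize,
}

impl Admission {
    pub fn new(limit: usize) -> Self {
        Self { in_flight: AtomicUsize::new(0), limit }
    }

    /// Takes a slot if one is free. Never waits: a full system yields
    /// `false` straight away, so the caller can reject the request.
    pub fn try_admit(&self) -> bool {
        self.in_flight
            .fetch_update(Ordering::AcqRel, Ordering::Acquire, |n| {
                if n < self.limit { Some(n + 1) } else { None }
            })
            .is_ok()
    }

    /// Frees a slot once an admitted request has completed.
    /// Must be called exactly once per successful `try_admit`.
    pub fn release(&self) {
        self.in_flight.fetch_sub(1, Ordering::AcqRel);
    }
}
```

Because the check never blocks, a request that does not fit fails fast, while an admitted request waits at most as long as the bounded queue in front of it.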

Now the service degrades substantially. The queue shrinks to almost nothing and the concurrency is slowly reduced until the latency matches the goal.
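One way to picture the slow reduction in concurrency is a controller that removes a single slot whenever the measured average latency still exceeds the goal. This is only a sketch of that idea: `next_concurrency` is a hypothetical function, and the one-slot step and the floor of one are assumptions rather than the crate's real adjustment rule.

```rust
use std::time::Duration;

/// Hypothetical controller step (illustrative only): returns the concurrency
/// limit to use for the next measurement window.
pub fn next_concurrency(current: usize, avg_latency: Duration, target: Duration) -> usize {
    if avg_latency > target {
        // Still too slow: give up one slot, but always keep at least one
        // request in flight so latency can continue to be measured.
        current.saturating_sub(1).max(1)
    } else {
        // Latency is at or under the goal: hold the current limit.
        current
    }
}
```

Calling this once per measurement window lowers the limit gradually, one slot at a time, and stops as soon as the measured latency is back at or under the target.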

Finally, the service recovers. The middleware rapidly notices and returns to its initial steady state.

License

Licensed under either of

- Apache License, Version 2.0 (LICENSE-APACHE or http://www.apache.org/licenses/LICENSE-2.0)

- MIT license (LICENSE-MIT or http://opensource.org/licenses/MIT)

at your option.

Contribution

This project welcomes contributions and suggestions; just open an issue or pull request!

Unless you explicitly state otherwise, any contribution intentionally submitted for inclusion in the work by you, as defined in the Apache-2.0 license, shall be dual licensed as above, without any additional terms or conditions.
